Research Projects

Intelligent Agents and Deep Search / Deep Research

The explosive growth of complex, knowledge-intensive information needs has exposed the limitations of traditional single-query, one-shot information retrieval. When a user faces a multifaceted question—such as comparing financial strategies, surveying a scientific field, or making a legal argument—simply typing one query into a search engine is woefully insufficient. Humans in practice iterate: they search, read, reason about what they found, refine their questions, search again, and ultimately synthesize a comprehensive answer.

Zhicheng Dou's most prominent and rapidly expanding research direction aims to replicate this expert-level iterative process by building deep search and deep research agents powered by large reasoning models. The core motivation is to move beyond passive retrieval toward active, agentic information seeking, in which an AI system autonomously plans multi-step search strategies, retrieves and reads web pages, reasons over the gathered evidence, and produces well-grounded reports. Key results in this area include the following. WebThinker (NeurIPS 2025) enables large reasoning models to interleave deep thinking with iterative web search within their chain of thought, and ranked #1 among HuggingFace Daily Papers. Search-o1 (EMNLP 2025) introduced agentic search-enhanced reasoning by letting models autonomously decide when and what to retrieve during reasoning. HiRA (SIGIR 2026) decouples planning from execution via hierarchical reasoning, and DeepAgent (WWW 2026) extends the paradigm to general-purpose reasoning agents with dynamically scalable toolsets. On the training side, ARPO (ICLR 2026) proposes Agentic Reinforced Policy Optimization specifically for training agents, and ranked #1 on both the HuggingFace Daily and Weekly Paper charts. Tool-Star (SIGIR 2026) empowers multi-tool collaborative web agents via reinforcement learning, while Tool-Light (ICLR 2026) advances self-evolved preference learning for tool-integrated reasoning. The group has also built domain-specific deep research agents, including FinSight (ACL 2026) for financial deep research, LawThinker for legal reasoning, and HierSearch (AAAI 2026) for enterprise search that integrates local and web knowledge. Supporting infrastructure includes LASER for governing long-horizon agentic search, SmartSearch (SIGIR 2026) for process-reward-guided query refinement, Agentic-R (ACL 2026 Findings) for learning retrieval strategies, and a comprehensive survey on Memory in the Age of AI Agents.
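The search, read, reason, and refine loop described above can be sketched as a small control loop. This is a minimal illustration under stated assumptions, not the architecture of WebThinker, Search-o1, or any other system named here: `TOY_WEB`, `search`, and `refine` are hypothetical stand-ins for a real search tool and for the reasoning model that would plan and rewrite queries, and the final report-synthesis step is omitted.

```python
from dataclasses import dataclass, field

# Hypothetical toy "web": maps a normalized query to page snippets.
TOY_WEB = {
    "fund a fees": ["Fund A charges a 0.5% annual fee."],
    "fund b fees": ["Fund B charges a 1.2% annual fee."],
}


def search(query: str) -> list[str]:
    """Hypothetical stand-in for a web search tool: exact lookup in TOY_WEB."""
    return TOY_WEB.get(query, [])


def refine(query: str) -> str:
    """Hypothetical reformulation rule (a real agent would ask the model): lowercase, drop trailing '?'."""
    return query.lower().rstrip("? ")


@dataclass
class ResearchState:
    question: str
    pending: list[str]                          # sub-queries not yet issued
    evidence: list[str] = field(default_factory=list)
    steps: int = 0


def deep_research(question: str, plan: list[str], max_steps: int = 5) -> ResearchState:
    """Search, read, and refine until the plan is exhausted or the step budget runs out."""
    state = ResearchState(question, list(plan))
    while state.pending and state.steps < max_steps:
        query = state.pending.pop(0)
        state.steps += 1
        results = search(query)
        if results:
            state.evidence.extend(results)      # "read" the hits into the evidence pool
        else:
            better = refine(query)
            if better != query:                 # retry only if refinement changed the query
                state.pending.append(better)
    return state


state = deep_research("Which fund is cheaper?", ["Fund A fees?", "fund b fees"])
print(state.evidence)
```

The first planned query fails, is refined, and is retried at the end of the queue, so the evidence about Fund B arrives before the evidence about Fund A. The `max_steps` budget is the kind of limit a long-horizon agent needs so that repeated refinement cannot loop forever.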


Retrieval-Augmented Generation

Large language models, despite their remarkable generative capabilities, suffer from hallucination, knowledge staleness, and limited ability to provide verifiable, factually grounded answers—particularly in knowledge-intensive domains. Retrieval-augmented generation addresses these shortcomings by retrieving relevant external documents and injecting them into the LLM's generation process, effectively grounding the model's output in real-world evidence.

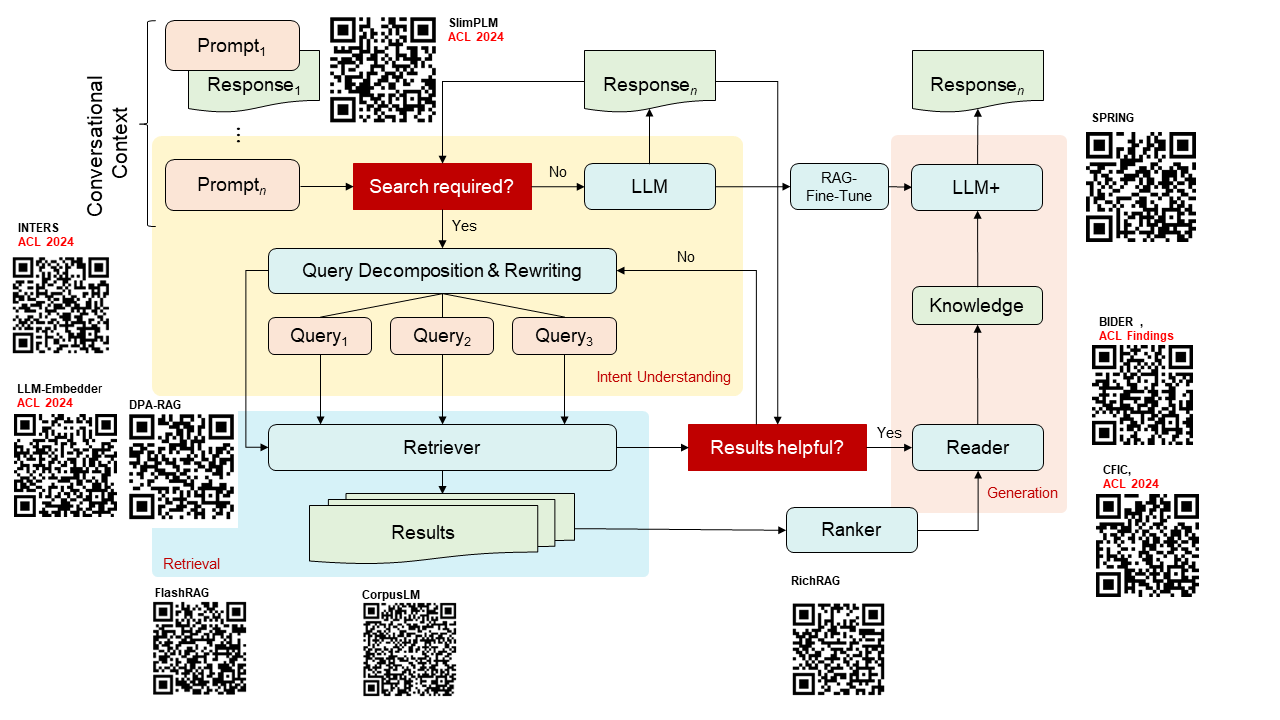
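The retrieve-then-inject step can be sketched in a few lines. This is a minimal sketch assuming a toy lexical retriever in place of the dense retrievers and rerankers studied by the group, and a hypothetical prompt template; the call to the LLM that would consume the prompt is deliberately left out.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"\w+", text.lower())


def retrieve(query: str, corpus: list[str], k: int = 2) -> list[str]:
    """Toy lexical retriever (an assumption): score = occurrences of query terms in the passage."""
    q_terms = set(tokenize(query))
    scored = []
    for idx, passage in enumerate(corpus):
        counts = Counter(tokenize(passage))
        score = sum(counts[t] for t in q_terms)
        scored.append((score, -idx, passage))   # ties go to the earlier passage
    scored.sort(reverse=True)
    return [passage for score, _, passage in scored[:k] if score > 0]


def build_prompt(query: str, passages: list[str]) -> str:
    """Inject numbered passages ahead of the question (hypothetical template) so the answer can cite them."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the numbered passages and cite them like [1].\n"
        f"{context}\n\nQuestion: {query}\nAnswer:"
    )


corpus = [
    "FlashRAG is a modular toolkit for RAG research.",
    "The Eiffel Tower is in Paris.",
    "RAG retrieves documents before generation.",
]
question = "How does RAG retrieve documents?"
print(build_prompt(question, retrieve(question, corpus)))
```

Numbering the passages gives the generator something concrete to cite, which is one simple way to make the output verifiable against the retrieved evidence.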

Zhicheng Dou's group has made systematic contributions across the entire RAG pipeline, from retrieval and evidence extraction to generation faithfulness and evaluation. Specifically, the group studies the following problems: (1) necessity of RAG; (2) query understanding; (3) retrieval and ranking models; (4) key evidence extraction; (5) LLM fine-tuning; and (6) evaluation and benchmarking.

A landmark contribution is FlashRAG (WWW 2025 Resource, GitHub repository), a modular, open-source Python toolkit that packages 32 benchmark RAG datasets and 14 state-of-the-art RAG algorithms. On the modeling side, HtmlRAG (WWW 2025) demonstrates that using HTML-structured content rather than plain text significantly improves RAG quality by preserving document structure. MemoRAG (WWW 2025) introduces a global memory mechanism to enhance long-context RAG processing. RetroLLM (ACL 2025) integrates retrieval directly into the generation process, enabling LLMs to retrieve fine-grained evidence on the fly. Chain-of-Retrieval Augmented Generation (CoR) (NeurIPS 2025) chains iterative retrieval steps with reasoning, while RoleRAG (EMNLP 2025) innovatively assigns multiple roles (retriever, reranker, generator) to a single LLM via role-specific token optimization. DPA-RAG (WWW 2025) aligns both retriever and generator through dual preference alignment. The group has also contributed important evaluation frameworks: OmniEval (EMNLP 2025) provides omnidirectional RAG evaluation in the financial domain, while HawkBench (NeurIPS 2025 DB) investigates RAG resilience across stratified information-seeking tasks. More recent works include ProRAG (2026) applying process-supervised reinforcement learning to RAG, R³AG (ACL 2026) for retriever routing, TimeRAG (CIKM 2025) for temporal reasoning, and RLSeek (ACL 2026) for evidence-grounded RAG hallucination detection.


Large Language Models for Information Retrieval (LLM + IR)

The integration of large language models (LLMs) into information retrieval (IR) marks a fundamental transition from traditional matching-based frameworks to generation-centric paradigms. Conventional IR systems primarily rely on term matching and static ranking algorithms, which often struggle with semantic gaps, contextual understanding, and complex user intents. LLMs address these limitations by enhancing query understanding, document retrieval, relevance ranking, and answer generation—either as augmentations to existing search engines or as core reasoning engines in generative retrieval architectures.
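The semantic gap that term matching suffers from is easy to reproduce. In this minimal sketch, which uses made-up example strings and a plain count of shared terms as a stand-in for lexical ranking functions, a relevant document that paraphrases the query scores zero while an off-topic one that repeats its words scores higher.

```python
import re


def term_overlap(query: str, doc: str) -> int:
    """Count distinct query terms that literally appear in the document (toy lexical matcher)."""
    q_terms = set(re.findall(r"\w+", query.lower()))
    d_terms = set(re.findall(r"\w+", doc.lower()))
    return len(q_terms & d_terms)


# Hypothetical example strings.
query = "cheap car rental"
relevant = "Affordable automobile hire at the airport."
off_topic = "Car wash prices: cheap rates for every car."
print(term_overlap(query, relevant), term_overlap(query, off_topic))
```

Here the relevant page scores 0 and the off-topic page scores 2, which is the vocabulary mismatch that LLM-based query understanding and semantic ranking aim to close.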

Zhicheng Dou's research in this direction investigates the deep integration of LLMs into IR systems, going beyond simply using LLMs as black-box rankers to fundamentally rethinking how retrieval, ranking, and understanding can be unified within large-scale language models. The cornerstone of this direction is the survey Large Language Models for Information Retrieval: A Survey (TOIS 2025) (LLM4IR-Survey GitHub repo). On the ranking front, ReasonRank (ACL 2026) empowers passage ranking with strong reasoning ability by having models explicitly reason about relevance before scoring, while DemoRank (TOIS 2026) develops methods for selecting effective in-context demonstrations for LLMs in ranking tasks. The group has also made important contributions to pre-training models specifically tailored for IR: Webformer (SIGIR 2022) pre-trains with web pages for information retrieval, PSLOG (KDD 2023) pre-trains with search logs for document ranking, and Socialformer (WWW 2022) uses social-network-inspired architectures for long document modeling. On the retrieval side, the group explores internalizing explicit reasoning into latent space for dense retrieval (SIGIR 2026) and multi-modal retrieval including e5-omni (ACL 2026 Findings) for omni-modal embeddings, MoCa (ACL 2026) for modality-aware multimodal embeddings, and ATIR (ACL 2026) for audio-text interleaved retrieval.


Legal AI

The legal domain presents a compelling application area for information retrieval and natural language processing due to its massive volumes of textual data—statutes, case law, court opinions—and the critical need for precision, interpretability, and explainability. Unlike general web search, where a short query is matched against relatively self-contained documents, legal case retrieval requires comparing lengthy, highly specialized case descriptions where relevance goes far beyond superficial semantic similarity: determining whether two cases are truly analogous demands understanding the specific legal elements—the constituent facts that establish a particular charge—and the applicable statutes underpinning each case. Meanwhile, legal judgment prediction—automatically predicting the charge, applicable law articles, and penalty term from a case's factual description—faces the acute challenge that many charges are confusingly similar (e.g., theft vs. robbery, fraud vs. contract fraud), making it easy for models to conflate them without fine-grained discriminative reasoning. Zhicheng Dou's group has systematically addressed both tasks through a series of increasingly sophisticated approaches, progressing from element-level legal knowledge modeling through LLM-based discriminative reasoning to autonomous agentic legal research systems.

The two tasks are legal case retrieval (finding the most relevant precedent cases for a given query case) and legal judgment prediction (predicting the outcome of a case from its factual description). Contrastive Learning for Legal Judgment Prediction (TOIS 2023) introduces contrastive pre-training strategies tailored to the legal domain, learning to distinguish between similar but legally distinct cases. Case Retrieval for Legal Judgment Prediction (CCL 2023) integrates retrieval into the prediction pipeline, while Few-Shot Charge Prediction with Multi-grained Features (CCL 2021) addresses the practical challenge of rare charges with limited training examples.

A recent contribution is Elem4LCR (ACL 2024 Findings), which reveals that legal elements can dramatically improve relevance matching in legal case retrieval. The paper uses a two-stage semi-automatic method to construct LeCaRD-Elem, a Chinese legal element dataset containing 107 query cases and 9,088 candidate cases with annotated legal elements, and proposes two models: Elem4LCR-E, which explicitly predicts legal elements before ranking, and Elem4LCR-I, an end-to-end model that internalizes legal element knowledge via a tailored teacher-student training framework. The code is publicly released, and the dataset is available on HuggingFace.

Building on this element-level understanding, KELLER (EMNLP 2024 Main, pages 1253–1265) tackles interpretability in retrieval by leveraging LLMs augmented with professional legal knowledge about crimes and law articles to reformulate original lengthy legal cases into concise sub-facts of crimes, preserving essential information while making the retrieval process transparent to legal practitioners. Extensive experiments on two legal case retrieval benchmarks demonstrate KELLER's superior retrieval performance and robustness on complex legal case queries.

On the judgment prediction side, ADAPT (EMNLP 2024 Findings) introduces a reasoning framework that mirrors human judicial reasoning through three stages: Ask (decomposing case facts into structured elements), Discriminate (distinguishing among confusingly similar potential charges), and Predict (arriving at the final judgment). ADAPT addresses a key weakness of LLMs in the legal domain—their tendency to confuse similar charges—by explicitly training discriminative reasoning capabilities through multi-task synthetic trajectories.

Most recently, the group has brought the agentic paradigm into the legal domain. GLARE introduces an agentic legal reasoning framework where the system dynamically acquires key legal knowledge by invoking different modules—statute retrieval, case retrieval, and element analysis—during its reasoning chain, improving both the breadth and depth of legal reasoning while generating interpretable reasoning traces. LawThinker (arXiv 2026) extends this paradigm into a full deep research legal agent operating in dynamic judicial environments with evolving legal databases. LawThinker adopts an Explore-Verify-Memorize strategy with a DeepVerifier module that examines each retrieval result along three dimensions—knowledge accuracy, fact-law relevance, and procedural compliance—with a memory module for cross-round knowledge reuse in long-horizon tasks. Experiments on the dynamic J1-EVAL benchmark show a 24% improvement over direct reasoning and an 11% gain over workflow-based methods.

Together, these works show how techniques from IR and LLM research can be meaningfully applied to high-stakes, domain-specific scenarios where accuracy, interpretability, and adherence to legal reasoning standards are paramount.

Generative Retrieval

Traditional information retrieval systems rely on a two-stage pipeline: first building an explicit index over a document corpus, then searching that index to retrieve candidates. Generative retrieval fundamentally reimagines this paradigm by replacing the explicit index with an end-to-end neural model that directly generates document identifiers given a query, effectively memorizing the entire corpus within the model's parameters. This approach promises tighter integration of retrieval with generation, enabling unified modeling that can better leverage the reasoning capabilities of large language models.
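A common ingredient of generative retrieval is constrained decoding: the model's output is restricted to a prefix tree (trie) of valid document identifiers, so it can only generate identifiers that exist in the corpus. The sketch below assumes hypothetical hierarchical docids and replaces the neural model with a toy scorer (`make_toy_scorer`); it uses greedy decoding rather than the beam search real systems often use, and it is not the procedure of any specific model named in this section.

```python
from typing import Callable

END = "<eos>"  # marks the end of a complete document identifier

# Hypothetical hierarchical docids, each a sequence of tokens.
DOCIDS = [
    ["sports", "football", "001"],
    ["sports", "tennis", "002"],
    ["science", "physics", "003"],
]


def build_trie(docids: list[list[str]]) -> dict:
    """Prefix tree over docid token sequences."""
    root: dict = {}
    for tokens in docids:
        node = root
        for tok in tokens + [END]:
            node = node.setdefault(tok, {})
    return root


def constrained_greedy_decode(trie: dict, score: Callable[[list[str], str], float]) -> list[str]:
    """Greedy decoding restricted to trie children, so every output is a real docid."""
    node, prefix = trie, []
    while True:
        allowed = sorted(node)  # fixed order makes tie-breaking deterministic
        best = max(allowed, key=lambda tok: score(prefix, tok))
        if best == END:
            return prefix
        prefix.append(best)
        node = node[best]


def make_toy_scorer(query: str) -> Callable[[list[str], str], float]:
    """Hypothetical stand-in for a seq2seq model's next-token score given the query."""
    words = set(query.lower().split())
    return lambda prefix, tok: 1.0 if tok in words else 0.0


trie = build_trie(DOCIDS)
print(constrained_greedy_decode(trie, make_toy_scorer("latest tennis results in sports")))
```

Even for a query with no matching tokens, the decoder returns a valid identifier, because invalid continuations are never offered to the scorer.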

Zhicheng Dou's group has been a leading force in advancing generative retrieval along multiple fronts, as reflected in their authoritative survey From Matching to Generation: A Survey on Generative Information Retrieval (TOIS 2025), with an accompanying GenIR-Survey GitHub repo. A foundational challenge is designing effective document identifiers—the tokens or codes that the model generates to "point to" a document. NOVO (CIKM 2023) introduces learnable and interpretable document identifiers for model-based IR, while D2-DocID (AAAI 2025) proposes descriptive and discriminative document identifiers that capture both semantic content and uniqueness. On the system level, CorpusLM (SIGIR 2024) builds a unified language model on an entire corpus for knowledge-intensive tasks, and UniGen (AAAI 2024) presents a unified generative framework covering both retrieval and question answering. ROGER (TOIS 2024) addresses ranking-oriented generative retrieval. Earlier work like Ultron and DynamicRetriever explored pre-trained model-based IR systems without explicit indexes. These contributions collectively establish a research arc from foundational document representation design through training methodology to practical system building, positioning generative retrieval as a viable alternative to traditional index-based approaches.

Conversational Search

Traditional search engines are designed for single-turn interactions: a user types a query, receives a ranked list, and the session ends. In reality, complex information needs often unfold over multiple dialogue turns—a user asks a question, receives an answer, and then follows up with refinements, clarifications, or entirely new sub-questions. Conversational search addresses this gap by supporting multi-turn information-seeking dialogues, where the system must continuously track context, resolve coreferences and ellipses, and understand evolving search intent. Zhicheng Dou's group has systematically tackled the key challenges of this paradigm. One fundamental problem is conversational query understanding: ConvTrans (EMNLP 2022) transforms web search sessions for conversational dense retrieval, while Conversational Query Editing (ACL 2023 Findings) explicitly rewrites conversational queries to make them self-contained. ChatRetriever (EMNLP 2024) adapts LLMs for generalized and robust conversational dense retrieval, and LLMs Know Your Contextual Search Intent (EMNLP 2023 Findings) demonstrates that LLMs can be prompted to understand multi-turn search intent. The group's work on Learning Denoised and Interpretable Session Representation (WWW 2023, Spotlight) received a Paper Award Nomination for its innovative approach to modeling noisy conversational contexts. On the proactive side, Multi-turn Clarification Generation (WWW 2024) and ClariLM (CIKM 2025) focus on generating clarifying questions to resolve ambiguity. More recently, the group has extended conversational search into the RAG era with CORAL (NAACL 2025 Findings) for multi-turn conversational RAG, and ConvRAG (CIKM 2025) using evolving graph-based context modeling. The group also authored a comprehensive 50-page survey, A Survey of Conversational Search (TOIS 2025), providing a definitive reference for the field.

Personalized Search, Recommendation, and Dialogue

Search engines face a fundamental challenge: the vast majority of user queries are short and ambiguous, and different users often have completely different information needs behind identical query strings. Zhicheng Dou has been a pioneering researcher in personalized search since the early stages of his career, beginning with his seminal large-scale evaluation of personalized search strategies (WWW 2007), which is his most-cited work. This foundational study revealed that personalization is most effective for ambiguous queries with high click entropy but may actually hurt performance on clear queries. Building on this, his group developed increasingly sophisticated methods: HRNN (CIKM 2018) models dynamic user profiles using hierarchical RNNs with query-aware attention, PSGAN (SIGIR 2019) applies generative adversarial networks to handle limited and noisy click data, and Knowledge Enhanced Personalized Search (SIGIR 2020) incorporates external knowledge graphs. PSSL (CIKM 2021) introduces self-supervised contrastive learning, and FedPS (WWW 2021) applies federated learning to protect user privacy while enabling personalization. The personalization theme extends to dialogue systems: One Chatbot Per Person (SIGIR 2021) creates personalized chatbots based on implicit user profiles, supported by the Pchatbot dataset. For recommendation, the group developed cognition-aware knowledge graph reasoning (WSDM 2023), dynamic negative sampling (WWW 2022), and unified search-recommendation models; more recently, RecThinker (2026) brings agentic reasoning to recommendation. This direction represents Dou's longest-running research thread, evolving from classical web search personalization into modern LLM-era personalized and interactive systems.
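Click entropy, the query-ambiguity measure behind the finding above, summarizes how spread out the clicks for a query are: ClickEntropy(q) = −Σ_p P(p|q) log2 P(p|q), where P(p|q) is the fraction of clicks for q that land on page p. The sketch below computes it from a hypothetical log of (query, clicked URL) pairs; it illustrates the definition and is not code from the WWW 2007 study.

```python
import math
from collections import Counter, defaultdict


def click_entropy(click_log: list[tuple[str, str]]) -> dict[str, float]:
    """Per-query click entropy; P * log2(1/P) is used as the equivalent of -P * log2(P)."""
    clicks: dict[str, Counter] = defaultdict(Counter)
    for query, url in click_log:
        clicks[query][url] += 1
    entropy = {}
    for query, counts in clicks.items():
        total = sum(counts.values())
        entropy[query] = sum((c / total) * math.log2(total / c) for c in counts.values())
    return entropy


# Hypothetical click log of (query, clicked URL) pairs.
log = [("microsoft", "microsoft.com")] * 4 + [
    ("jaguar", "jaguar.com"), ("jaguar", "jaguar.com"),
    ("jaguar", "en.wikipedia.org/wiki/Jaguar"), ("jaguar", "en.wikipedia.org/wiki/Jaguar"),
]
print(click_entropy(log))
```

A navigational query such as "microsoft" gets entropy 0, while "jaguar", split evenly between two pages, gets 1 bit; by the finding above, personalization would be expected to help the second kind of query more. Any cutoff for switching personalization on would be a tuning choice not specified here.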

Search Result Diversification

When a search query is ambiguous or multi-faceted, returning a ranked list dominated by a single interpretation risks failing users whose intent differs from the majority. Search result diversification ensures that the top results cover as many distinct subtopics or user intents as possible. Since 2011, Zhicheng Dou has been one of the most active researchers in this area, with contributions spanning subtopic mining, diversification algorithms, and evaluation methodology. His early work on Multi-dimensional Search Result Diversification (WSDM 2011) produced the best run in the TREC 2009 Web Track diversity task. A unified evaluation framework (SIGIR 2013, Best Paper Runner-Up Award) brought summaries, ranked retrieval, and sessions under a single evaluation paradigm, influencing how the community measures search effectiveness. Learning to Diversify via Subtopic Attention (SIGIR 2017, TKDE 2018) introduced neural attention mechanisms for supervised diversification. DVGAN (SIGIR 2020) combined explicit and implicit features in a GAN-based framework, while GDESA (TOIS 2023) proposed a greedy diversity encoder with self-attention. Knowledge Enhanced Search Result Diversification (KDD 2022) leveraged external knowledge graphs. Recent works include A Model-agnostic Pre-training Framework for Search Result Diversification (TOIS 2026) and the FairDiverse toolkit (SIGIR 2025) for fairness- and diversity-aware IR. The group has also contributed extensively to query facet mining (discovering the underlying dimensions of a query from its search results), with multiple papers in TKDE (2016, 2017).
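To make the goal of covering multiple subtopics concrete, here is a minimal sketch of xQuAD (Santos et al., WWW 2010), a well-known explicit diversification method from outside this group, swapped in purely for illustration. It greedily picks the document that best balances relevance P(d|q) against how well it covers subtopics that earlier picks left uncovered. The subtopic weights and coverage probabilities are made-up inputs; estimating them is the hard part in practice.

```python
def xquad(
    candidates: list[str],
    relevance: dict[str, float],              # P(d|q)
    subtopics: dict[str, float],              # P(s|q)
    coverage: dict[str, dict[str, float]],    # coverage[d][s] = P(d|s)
    k: int,
    lam: float = 0.5,
) -> list[str]:
    """Greedy xQuAD re-ranking: relevance traded off against coverage of still-uncovered subtopics."""
    selected: list[str] = []
    remaining = list(candidates)
    # uncovered[s] = product over selected docs d of (1 - P(d|s))
    uncovered = {s: 1.0 for s in subtopics}

    def objective(d: str) -> float:
        diversity = sum(w * coverage[d].get(s, 0.0) * uncovered[s] for s, w in subtopics.items())
        return (1 - lam) * relevance[d] + lam * diversity

    while remaining and len(selected) < k:
        best = max(remaining, key=objective)
        selected.append(best)
        remaining.remove(best)
        for s in uncovered:
            uncovered[s] *= 1.0 - coverage[best].get(s, 0.0)
    return selected


# Hypothetical "jaguar" example: two car pages and one animal page.
relevance = {"d1": 0.9, "d2": 0.85, "d3": 0.6}
subtopics = {"car": 0.5, "animal": 0.5}
coverage = {"d1": {"car": 0.9}, "d2": {"car": 0.9}, "d3": {"animal": 0.9}}
print(xquad(["d1", "d2", "d3"], relevance, subtopics, coverage, k=2))
```

With `lam=0.5` the animal page displaces the second car page; with `lam=0.0` the ranking reduces to plain relevance order (`d1`, `d2`).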

Query Facet Mining

We address the problem of finding multiple groups of words or phrases that explain the underlying facets of a query; we refer to these groups as query dimensions or facets. We assume that the important aspects of a query are usually presented and repeated in the query’s top retrieved documents in the form of lists, so query facets can be mined by aggregating these significant lists.
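The list-aggregation idea can be sketched as follows, assuming the lists have already been extracted from the query's top documents. Grouping lists by Jaccard overlap, the `min_overlap` and `min_lists` thresholds, and ranking items by the number of lists that contain them are all illustrative assumptions, not the published facet-mining model.

```python
from collections import Counter


def mine_facets(lists: list[list[str]], min_overlap: float = 0.3, min_lists: int = 2) -> list[list[str]]:
    """Group lists with overlapping items into facets; rank items by how many lists contain them."""
    clusters: list[dict] = []
    for items in lists:
        item_set = {item.strip().lower() for item in items if item.strip()}
        if not item_set:
            continue
        # Join the first cluster whose items overlap enough (Jaccard), else start a new one.
        for cluster in clusters:
            shared = len(item_set & cluster["items"])
            if shared / len(item_set | cluster["items"]) >= min_overlap:
                break
        else:
            cluster = {"items": set(), "support": Counter(), "lists": 0}
            clusters.append(cluster)
        cluster["items"] |= item_set
        cluster["support"].update(item_set)
        cluster["lists"] += 1
    # Keep facets repeated across several lists; most-supported facets and items first.
    kept = sorted((c for c in clusters if c["lists"] >= min_lists), key=lambda c: -c["lists"])
    return [sorted(c["support"], key=lambda item: (-c["support"][item], item)) for c in kept]


# Hypothetical lists extracted from the top results for the query "watches".
top_doc_lists = [
    ["Rolex", "Omega", "Seiko"],
    ["Omega", "Seiko", "Casio"],
    ["Men's", "Women's", "Kids'"],
    ["Men's", "Women's"],
    ["Contact", "About"],
]
print(mine_facets(top_doc_lists))
```

The brand and gender lists each recur across two documents and survive as facets, while the one-off navigation list ("Contact", "About") is discarded as noise.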